Conjure Oxide

Welcome to the documentation of Conjure Oxide, a next generation constraints modelling tool written in Rust. It supports models written in the Essence and Essence Prime constraints modelling languages.

Conjure Oxide is in the early stages of development, so most Essence language features are not supported yet. However, it does currently support most of Essence Prime.

This site contains the user documentation for Conjure Oxide; other useful links can be found on the useful links page.

Contributing

The project is primarily developed by students and staff at the University of St Andrews, but we also welcome outside contributors; for more information see the contributor’s guide.

Licence

The Conjure Oxide source and documentation are released under the Mozilla Public Licence v2.0.

Side Projects

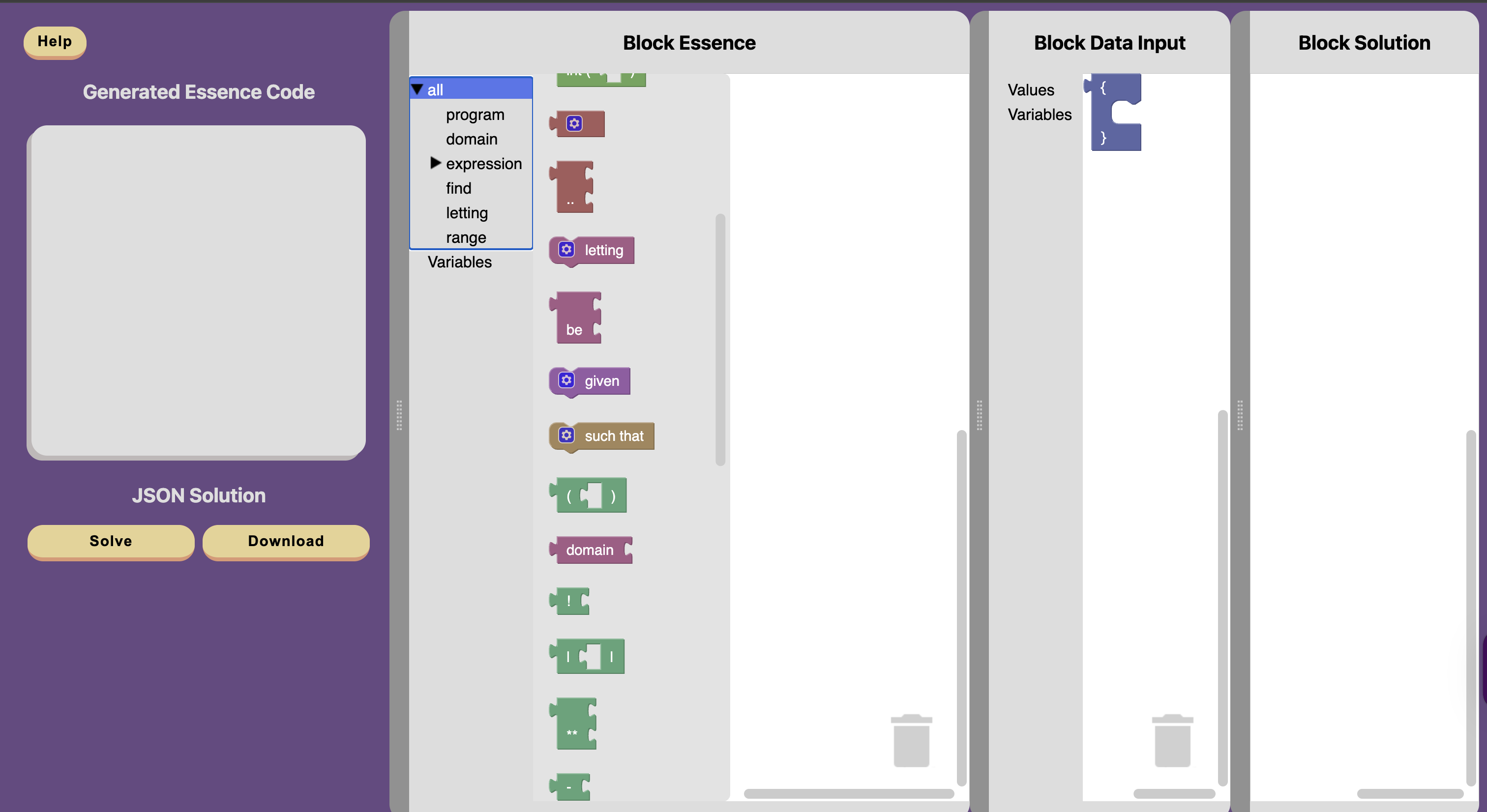

Conjure Blocks

Conjure blocks is an online block editor for Essence. This tool allows you to write constraint programs in blocks, which are then translated into Essence. Conjure-aaS is used to solve these problems. Conjure blocks is an ongoing project.

See the the Conjure Blocks repository, the Conjure Blocks Documentation and the live site for more information.

Useful Links

API Documentation

Internal crates

The following crates are internal, and are re-exported by conjure-cp:

- conjure-cp-cli

- conjure-cp-core

- conjure-cp-essence-macros

- conjure-cp-essence-parser

- conjure-cp-rule-macros

- conjure-cp-enum-compatibility-macro

Code Coverage

Code coverage reports can be found here.

Miscellaneous

For Interested Students

This project is developed as part of the “Artificial Intelligence for Decision Making” Vertically Integrated Project module. For more information on the VIP, click here.

Glossary

-

PR: Pull request. It’s a proposal to merge code changes into the main branch, and allows collaborators to review and suggest modifications before it’s accepted.

-

Branch: A branch contains a different history of code changes (commits). You can start a new branch A’ off of any branch A and push new commits to A’ without affecting the contents of A. Merging branch A’ into A adds all new changes from A’ to A.

-

RFC: Request for Comments. It is a design proposal, often written before or alongside major changes.

-

Essence: A high level constraint modelling language. Essence is declarative, i.e. it states the problem rather than how to solve it. It also lets us model things at the class level.

-

A class-level model (i.e. description of a problem) may have some parameters. To get from a class-level model to an instance model, we provide concrete values for all the parameters.

The following describes a problem class:

given N: int find x such that x < NThe following are instances of that class:

letting N be 3 find x such that x < Nletting N be 42 find x such that x < N

-

-

Conjure: Constraint modelling tool that translates Essence problem descriptions into Essence’ models that constraint solvers can execute.

-

Essence’ (Essence prime): Intermediate representation automatically generated by Conjure

-

Savile Row: It transforms high level Essence’ constraint models and outputs solver-specific model that can be executed. Written in Java.

-

Minion: Constraint solver. It takes low level constraint models (like Savile Row output) and searches for solutions. Specifically, it has its own input language that is extensively documented.

For an in-depth glossary of GitHub terms, see the GitHub Docs Glossary. This Git Cheat Sheet also contains resources about git commands.

Installation

Currently, Conjure Oxide is installed either by building from source or by downloading a nightly release.

Building From Source

Dependencies

The following dependencies are required to build and use Conjure Oxide:

- Conjure including solvers.

- Conjure is currently required for some components of Conjure Oxide. This is something that will change in the future as Conjure Oxide becomes more independent.

- Ensure that Conjure is placed early in your PATH to avoid conflicts with ImageMagick’s

conjurecommand!

- Clang and libclang.

- CMake.

- Rust installed using rustup.

Building

- Clone the repository:

git clone https://github.com/conjure-cp/conjure-oxide.git cd conjure-oxide - Run the install command to install

conjure-oxide(this may take some time):cargo install --path crates/conjure-cp-cli - Verify

conjure-oxideis installed and working by running a command:conjure-oxide --help

Downloading a Nightly Release

- Download the appropriate archive from the latest nightly release.

- Make sure you choose the correct archive for your system. Conjure Oxide currently supports ARM-based macOS (

aarch64-darwin) and x86-based Linux (x86_64-linux-gnu). - Make sure you choose the correct archive containing the dependencies you need:

- If you do not have Conjure or solvers on your system, download the archive for your system ending with

with-solvers. - If you have the solvers on your system but not Conjure, download the archive for your system ending with

with-conjure. - If you have both Conjure and the solvers on your system, download the archive for your system ending with

standalone.

- If you do not have Conjure or solvers on your system, download the archive for your system ending with

- Make sure you choose the correct archive for your system. Conjure Oxide currently supports ARM-based macOS (

- Extract the archive using your preferred method.

- Open a terminal in the extracted directory and run a test command:

./conjure-oxide --help

- If you are on macOS, you may run into a problem with binaries being blocked from running. If this is the case, run the following command in the extracted directory:

xattr -dr com.apple.quarantine . - If you would like these commands to be available everywhere on your system, copy the binaries into a directory which is in your PATH.

Troubleshooting

Unknown command: conjure-oxide

Check the path at which cargo install places binaries (see cargo-install(1)) and ensure it’s in your PATH environment variable. You may need to restart your shell for it to pick up these changes.

ImageMagick Conflicts

If the conjure command that is part of ImageMagick conflicts with your Conjure installation, ensure that the directory containing Conjure binaries is earlier in your PATH than the directory containing ImageMagick binaries.

macOS Quarantine

If you are running into problems on macOS, make sure you remove the quarantine attribute from all pre-built binaries using the following command in their containing directory:

xattr -dr com.apple.quarantine .

Quick Start Guide to Running your first Essence Model

This guide walks you through running your first Essence model with Conjure Oxide.

Your First Problem

Create a file called my_problem.essence with the following content:

find x : int(1..3)

find y : int(2..5)

such that x > y

If you are curious about more complex models, you can check out the models that we use to test Conjure Oxide, available in the tests-integration/tests/integration directory of the repository.

Running with Different Solvers

SAT Solver

--sat-encoding specifies the encoding strategy used by the SAT solver. This affects performance and solution structure.

Supported options:

log(default)directorder

Default usage (uses log):

cargo run -- solve --solver sat my_problem.essence

Specify an encoding:

cargo run -- solve --solver sat --sat-encoding direct my_problem.essence

Warning

Currently, running the command above will cause the following error:

model invalid: Only Boolean Decision Variables supportedThis because the SAT option for the solver argument only currently enables the base (boolean) rule set and does not specify an integer SAT ruleset to include. This is something we are currently working on, and should be resolved soon.

Minion Solver

cargo run -- solve --solver minion my_problem.essence

Expected output for both solvers:

Solutions:

[

{

"x": {

"Int": 3

},

"y": {

"Int": 2

}

}

]

Understanding What Happened

Conjure Oxide transformed your high-level Essence model through several steps:

- Parsing - Your Essence file was parsed into an internal AST

- Rule Application - Backend-specific rules transformed the model

- Solving - The transformed model was sent to the solver

- Solution Extraction - The solver’s output was converted back to Essence format

Want to see exactly what rules were applied? Check out the Logging guide.

Functional Programming Style

For developers who come from programming languages like Scala or Haskell, or those who favour a functional programming style, we have a Functional Rust guide that you might find useful.

Logging

This document is a work in progress - for a full list of logging options,

see Conjure Oxide’s --help output.

To stderr

Using --verbose, and the RUST_LOG environment variable, you can control the

contents, and formatting of, Conjure Oxide’s stderr output:

-

--verbosechanges the formatting of the logs for improved readability, including printing source locations and line numbers for log events. It also enables the printing of the log levelsINFOand above. -

The

RUST_LOGenvironment variable can be used to customise the log levels that are printed depending on the module . For more information, see: https://docs.rs/env_logger/latest/env_logger/#enabling-logging.

Example: Logging Rule Applications

Different log levels provide different information about the rules applied to the model:

-

INFOprovides information on the rules that were applied to the model. -

TRACEadditionally prints the rules that were attempted and why they were not applicable.

To see TRACE logs in a pretty format (mainly useful for debugging):

$ RUST_LOG=trace conjure-oxide solve --verbose <model>

Or, using cargo:

$ RUST_LOG=trace cargo run -- solve --verbose <model>

Example: Tracing SAT Solver Rules

When working with the SAT solver, you can trace the complete transformation pipeline:

RUST_LOG=TRACE cargo run -- solve --solver sat my_problem.essence --verbose

This will show:

- Integer-to-boolean conversions

- Operation transformations

- Tseytin transformations

- All rules that were tried and applied

For more detailed testing output (including JSON traces and rewritten models), run specific tests:

cargo test <test_name>

This generates .json and .txt files containing rule traces, parsed Essence, solutions, and the rewritten model.

Contributors Guide

We love your input! We want to make contributing to this project as easy and transparent as possible, whether it’s:

- Reporting a bug

- Discussing the current state of the code

- Submitting a fix

- Proposing new features

- Becoming a maintainer

Contributing

This project is open-source: you can find the source code on Github, and issues, questions, or feature requests can be posted on the Github issue tracker.

Currently, Conjure Oxide has been primarily developed by students and staff at the University of St Andrews. That being said, we welcome and encourage contributions from individuals outside the University. Over the course of this section, we will expand further into how you can contribute.

For more detailed information regarding our contributing process, please refer to CONTRIBUTING.md

Licence

This project is entirely open-source, with all code released under the MPL 2.0 license, unless stated otherwise.

This section had been adapted from the ‘home’ page of the conjure-oxide wiki, and CONTRIBUTING.md

Onboarding

Congratulations you are joining a very exciting project, pushing forward the field of Constraint Programming. However, there is a lot to learn before you are up to speed. Expect to spend the first few weeks on the project learning, and so do not rush starting to contribute right away.

Here are some tips for starting out.

What You Need to Learn

The Conjure-Oxide project fundamentally involves the development of a constraint modelling tool in the Rust programming language. Therefore, learning about constraint programming and Rust will be your initial challenges. Getting a solid understanding of Rust and constraints now will stand you in good stead for the rest of the project so do not worry that spending time learning is not productive, this learning is expected.

Constraint Programming

Constraint programming is a paradigm for solving combinatorial problems. Instead of defining an algorithm to solve a complex problem, we instead model it as a constraint satisfaction problem (CSP), and let one of many optimised solver programs solve it from there.

Whilst not essential to be a fully-fledged constraint programmer it is useful to understand roughly how this works. There are good video lectures online that would be worth a watch for a general understanding, such as the CP Summer School lectures.

Conjure-Oxide uses the input language Essence. Getting an understanding of how to code in Essence will be really useful when it comes to debugging and testing anything. Reading through Essence’s documentation will be useful for this. Another very useful method of learning Essence and some aspects of constraint programming is to explore the selection of example Jupyter notebooks.

Conjure-Oxide is built to fulfil a similar role to Conjure. Conjure is the current constraint modelling tool built in Haskell, which we are basing a lot of our implementation around. Understanding a little bit about Conjure will be useful for you, as you may find yourself needing to refer to Conjure’s source code later in your project.

Rust

If you do not currently know how to program in Rust, this is very important to learn to contribute to most parts of the project.

The best resource for learning Rust is The Rust Programming Language book, alongside the Rustlings resource. Rustlings will give you exercises to practice what you learned in the book as you go. If you find the book slow-going there are also great video tutorials available on YouTube that cover the book’s content. Whilst both resources are fantastic, you will most likely not find it necessary to complete them in their entirety. However, I would recommend you complete the Rustlings exercise 19_smart_pointers to understand concepts such as Box and Cow, as these are used heavily throughout Conure-Oxide’s codebase.

Understanding the Codebase

Once you have enough of an understanding to read Rust code you should start reading through Conjure-Oxide’s codebase. This is a large complex codebase, and it is likely that it will not all make sense initially. A good method of understanding the codebase is to follow the control flow of the program and try to understand why each step occurs. There will be areas of the code you do not need to understand the inner-workings of as a new user, and so gaining a full holistic understanding from the outset is not necessary. Instead focus on getting a good general understanding of Conjure-Oxide as a whole, including what each step in process does and where in the codebase it is located.

This is a fundamentally cooperative project, and so do not be afraid to ask for help from a more experienced member of the team if you are struggling to understand an important part of the code. Feel free to reach out through our GitHub Discussions page for help whenever you need it.

This stage will take a while, but understanding the core of the program will be tremendously helpful when you start implementing it.

Selecting a Project

Once you have spent time learning you will feel ready to select a project and begin contributing. There are lots that can be done in Conjure-Oxide, so if you have an idea of a project that is great, but also consider asking on our GitHub Discussions page for ideas, because there are many tasks new starts could reasonably achieve. Do not feel rushed into selecting a project. Having a good understanding of the system before you start writing code, will really facilitate your productivity later in the semester.

Selecting a Starter Project

Whilst you are familiarising yourself with the codebase, you may want to try tackling a couple smaller starter projects. There are always little issues in the codebase needing addressed and working on one of these, while you learn, can be a fantastic way to get a confidence boost from feeling productive. These issues are specifically marked with good first issue on GitHub. However, be aware that taking on a wide project with a lot of reach, even if small, can be very challenging for a new start, as it requires deeply understanding many different areas of the code.

Selecting a Project Early to Help Learn

If the scale of the codebase is feeling overwhelming, then consider talking to the project lead @ozgurakgun and selecting a project earlier on. The project would not need to be well defined at this point but even if you are not initially implementing features, having a project in mind can help focus your learning of the codebase. This narrows the scope of what you try to understand and helps make the codebase less overwhelming. However, you must ensure you still a good general understanding of Conjure-Oxide as a whole, or this will make expanding your project harder in the future.

The Most Important Things

You should realise that it is okay to spend time learning and not contributing, and you should not feel bad, it is expected. If you get a good understanding now it will help you in the future.

Also ensure to ask for help and talk to the rest of the team, using the GitHub Discussions. There will be people on the project who have done it for years now. Use their expertise.

Setting up your development environment

Conjure Oxide supports Linux and Mac.

Windows users should install WSL and follow the Linux (Ubuntu) instructions below:

Linux (Debian/Ubuntu)

The following software is required:

- The latest version of stable Rust, installed using rustup.

- A C/C++ compilation toolchain and libraries:

- Debian, Ubuntu and derivatives:

sudo apt install build-essential libclang-dev - Fedora:

sudo dnf group install c-developmentandsudo dnf install clang-devel

- Debian, Ubuntu and derivatives:

- Conjure.

- Ensure that Conjure is placed early in your PATH to avoid conflicts with ImageMagick’s

conjurecommand!

- Ensure that Conjure is placed early in your PATH to avoid conflicts with ImageMagick’s

- Z3, one of the solver backends, requires a separate install. Using a package installer (e.g.

apt), install Z3.

MacOS

The following software is required:

- the latest version of stable Rust, installed using rustup.

- an XCode Command Line Tools installation (installable using

xcode-select --install) - CMake:

brew install cmake(for SAT solving) - Conjure.

- Z3, one of the solver backends, requires a separate install. Using a package installer, install Z3 (e.g. for

Homebrewrunbrew install z3).

If you are having issues with Z3, you may need to update

~/.cargo/config.tomlto ensure theZ3_LIBRARY_PATH_OVERRIDEandZ3_SYS_Z3_HEADERenvironment variables are pointed to the right library path andz3.hfile that you installed.

St Andrews CS Linux Systems

-

Download and install the pre-built binaries for Conjure. Place these in

/cs/home/<username>/usr/binor elsewhere in your$PATH. -

Install

rustupand the latest version of Rust throughrustup. The school provided Rust version does not work.- By default,

rustupinstalls to your local home directory; therefore, you may need to re-installrustupand Rust after restarting a machine or when using a new lab PC.

- By default,

-

Install

z3, one of the solver backends. You may have to build it from source, and then add the binary to your path.

To add a binary to your PATH in a way that persists every time you log out, run:

mkdir -p /cs/home/$USER/.paths.d`

echo ~/Documents/... > /cs/home/$USER/.paths.d/z3

// where the "..." is the path to your compiled z3 binary

Improving Compilation Speed

Installing sccache improves compilation speeds of this project by caching crates and C/C++ dependencies system-wide.

- Install sccache and follow the setup instructions for Rust. Minion detects and uses sccache out of the box, so no C++ specific installation steps are required.

This section had been taken from the ‘Setting up your development environment’ page of the conjure-oxide wiki

How We Work

This is our Constitution, but in contrast to a real constitution of a company we intend this document to be a living document. Please feel free to suggest edits and improvements.

Principles

- Assume good faith.

- Take responsibility for your work.

- Look for opportunities to help others.

- Make it easy for others to help you.

Social contract

We agree to adhere to the following guidelines when engaging in group work.

- We communicate in English as a common, shared language.

- We include all group members in meetings, group chats, messages, and social events.

- We turn up on time to meetings.

- We pay attention and contribute to group discussions.

- We contribute to the group tasks.

- We listen to each other’s opinions.

- We share ideas and do not disregard other people’s thoughts or feelings.

- We are respectful towards one another and treat each other with care and courtesy.

- We complete tasks on time and to the best of our ability.

- We do not make assumptions about individual abilities.

Logistics

- GitHub: We use GitHub as our main platform for code collaboration and version control.

- Teams: We use Teams for day to day communications.

- Weekly report: We use a web form to provide an update to the supervisors every week. This is not assessed and doesn’t strictly follow the reflective writing methods. However, this resource on the Gibbs reflective cycle may still be helpful when approaching the weekly form and how to use the form effectively.

- Weekly meetings: The whole group meets once a week. Before this meeting everyone fills in the weekly report. We take minutes at these meetings.

Best Practices for Collaboration

1. Start with Early Pull Requests (PRs)

- Don’t wait until everything is perfect; submitting early PRs allows the team to provide feedback sooner. This helps catch potential issues early and encourages collaboration.

- Even if your code isn’t complete, opening a draft PR can be a good way to start discussions and ensure alignment.

2. Show, Don’t Tell

- Share your experiences and insights! Use GitHub or Microsoft Teams to share updates, challenges, and breakthroughs with the rest of the team.

- Showing real examples means other can more easily help you, it can help others understand your approach and may inspire new ideas.

- High-level explanations can inadvertently hide important details. Showing the actual artefact (whether it’s code, a design document or something else) together with your high-level commentary eliminates this risk.

3. Begin with Specifications

- Before you start coding, document your plan. Write out specifications or create a rough outline of what you’re working on, including examples where possible.

- Having a clear plan makes it easier to get feedback and helps others understand the purpose and scope of your work.

4. Share Failures as Much as Successes

- When something doesn’t work, share it! Learning from mistakes and discussing setbacks is invaluable.

- A failed attempt can often be as educational as a successful one, and it may prevent others from encountering the same issue.

5. Ask Good Questions

- Don’t hesitate to reach out when you’re stuck, but try to ask thoughtful, specific questions.

- Providing context and sharing what you’ve tried will help others give more helpful answers.

Final Thoughts

- Collaboration is key to our success, so feel free to communicate openly.

- Continuous improvement is encouraged—keep looking for ways to improve your code, processes, and knowledge.

This section had been taken from the ‘How we work’ page of the conjure-oxide wiki

Pull Requests

Tip

We Use Github Flow, so All Code Changes Happen Through Pull Requests

Our development process is as follows:

- Make a fork.

- Create a branch on your fork, do not develop on main.

- Create a pull request as soon as you want others to be able to see your progress, comment, and/or help:

- Err on the side of creating the pull request too early instead of too late. Having an active PR makes your work visible, allows others to help you and give feedback. Request reviews from people who have worked on similar parts of the project.

- Keep the PR in draft status until you think it’s ready to be merged.

- Assign PR to reviewer(s) when it’s ready to be merged.

- Only Oz (@ozgurakgun) can merge PRs, so add him as a reviewer when you want your PR to be merged.

- During reviewing, avoid force-pushing to the pull request, as this makes reviewing more difficult. Details on how to update a PR are given below.

- Once Oz has approved the PR:

- Update your PR to main by rebase or merge. This can be done through the Github UI or locally.

- Cleanup your git history (see below) or request your PR to be squash merged.

Style

- Run

cargo fmtin the project directory to automatically format code - Use

cargo clippyto lint the code and identify any common issues

See: [[Documentation Style]] and [[Rust Coding Style]] (TODO)

Commit and PR Titles

We use Semantic PR / Commit messages.

Format: <type>(<scope>): <subject>

(<scope> is optional)

Example

feat(parser): add letting statements

^--^ ^----^ ^--------------------^

| | |

| | +--> Summary in present tense.

| |

| +--> Area of the project affected by the change.

|

+-------> Type: chore, docs, feat, fix, refactor, style, or test.

Types

feat: new features for the end userchore: changes to build scripts, CI, dependency updates; does not affect production codefix: fixing bugs in production codestyle: purely stylistic changes to the code (e.g. indentation, semicolons, etc) that do not affect behaviourrefactor: changes of production code that do not add new features or fix specific bugstest: adding, updating, or refactoring test codedoc: adding or updating documentation

PR Messages

Your pull request should contain a brief description explaining:

- What changes you are making

- Why they are necessary

- Any significant changes that may break other people’s work

Additionally, you can link your PR to an issue. For example: closes issue #42.

Amending your PR and Force Pushes

You should avoid rebasing, amending, and force-pushing changes during PR review. This makes code review difficult by removing the context around code review comments and changes to a commit.

The recommended way to update PRs is to use git’s built-in support for fixups.

To make a change to a commit (e.g. addressing a code review comment):

git commit --fixup <commit>

git push

Once your PR is ready to merge, these fixup commits can be merged into their original commits like so:

git rebase --autosquash main

git push --force

We have CI checks to block accidental merging of fixup! commits.

See:

- https://rietta.com/blog/git-rebase-autosquash-code-reviews/

- https://git-scm.com/docs/git-commit#Documentation/git-commit.txt---fixupamendrewordltcommitgt

Before your PR is merged

When your PR is approved, you may need to rebase your branch onto main before it can be merged. Rebasing essentially adds all the latest commits from main to your branch if it has fallen behind main.

To do this:

-

Make sure that your

mainbranch is synced to the main repo -

Switch to the branch you’re making the PR from

-

Do:

git rebase main git push --force

(Optional) Cleaning up your Git history

Additionally, if you are proficient with git, you can use interactive rebase to clean up your commit history. This allows you to reorder, drop, or amend commits arbitrarily.

See:

There are some GUI tools to help you do that, such as the GitHub Client, GitKraken, various VS Code extensions, etc.

Warning

Interactive rebase and force-pushing overwrites your git history, so it can be destructive. This is also not a requirement!

Squashing PRs

Alternatively, you can ask for the PR to be “squashed”. This combines all your commits into one merge commit. Squashing PRs helps keep the commit history on main clean and logical without requiring you to go back and manually edit your commits!

What we didn’t do

The intention of this page is to chronicle decisions made during the development of conjure-oxide. There have been many situations where we have thought of something interesting that ends up not being the best solution to a problem and we believe others may come up with the same ideas. This page should be read before contributing so that potential contributors do not go down rabbit holes that have already been thoroughly searched.

Nested expressions within the polymorphic metadata field

Background

As discussed in Issue 182, we wanted to create a polymorphic metadata field that would be contained within the expression struct and that could be changed on a case-by-case basis as metadata might only be needed for a single module for example.

What we thought of

One interesting idea that was suggested as a structure like this:

#![allow(unused)]

fn main() {

// Define a trait for metadata in each module.

pub trait Metadata {

// Define methods specific to the metadata.

fn print_metadata(&self);

// Add other methods as needed.

}

// Module-specific metadata types.

pub mod module1 {

pub struct Metadata1 {

// Define fields specific to this metadata.

pub clean: bool,

// Add other fields as needed.

}

impl super::Metadata for Metadata1 {

fn print_metadata(&self) {

println!("Metadata1: Clean - {}", self.clean);

}

}

}

pub mod module2 {

pub struct Metadata2 {

// Define fields specific to this metadata.

pub status: String,

// Add other fields as needed.

}

impl super::Metadata for Metadata2 {

fn print_metadata(&self) {

println!("Metadata2: Status - {}", self.status);

}

}

}

// Modify the Expression enum to hold a trait object for metadata.

#[derive(Clone, Debug)]

pub enum Expression {

// Existing enum variants here...

WithMetadata(Box<Expression>, Option<Box<dyn Metadata>>),

}

impl Expression {

// Create a new expression with metadata.

pub fn with_metadata(expr: Expression, metadata: Option<Box<dyn Metadata>>) -> Expression {

Expression::WithMetadata(Box::new(expr), metadata)

}

// Extract the expression and metadata.

pub fn extract_metadata(self) -> (Expression, Option<Box<dyn Metadata>>) {

match self {

Expression::WithMetadata(expr, metadata) => (*expr, metadata),

_ => (self, None),

}

}

// Set metadata for an expression.

pub fn set_metadata(&mut self, metadata: Option<Box<dyn Metadata>>) {

if let Expression::WithMetadata(_, ref mut existing_metadata) = *self {

*existing_metadata = metadata;

}

}

// Get metadata for an expression.

pub fn get_metadata(&self) -> Option<&dyn Metadata> {

if let Expression::WithMetadata(_, Some(metadata)) = self {

Some(metadata.as_ref())

} else {

None

}

}

// Existing methods here...

}

}Why we didn’t do it

This is nice in the sense that there is less “ugliness” when creating expressions as there is no need to have metadata in every enum variant. However, this severely effects the way that the rewriter traverses the AST as there is now WithMetadata objects sprinkled throughout the AST which would require some way to traverse up the tree while saving the context of nodes below the metadata object. This image shows the differences in a basic AST:

What we did do

Due to this issue we decided that a simpler implementation where metadata is explicitly required every time but could be set to some empty metadata object was more practical. More details on this implementation can found in Near Future PR

This section had been adapted from the ‘What we didn’t do’ page of the conjure-oxide wiki

Writing Documentation

Creating Documentation

As an organisation, we want Conjure Oxide to be thoroughly documented so that new members of our growing team can integrate smoothly. Whether you are currently on a project or not, contributing to our documentation is essential to achieving this goal.

To make creating and implementing documentation as smooth as possible, we ask that you follow the workflow as outlined below.

Documentation Work Flow

-

Identify the documentation you will write.

In many cases, documentation contributors will be writing about projects they are currently working on or have already completed. However, there are still plenty of opportunities to contribute to documentation, even if you are not actively involved in a project or are seeking a small side project. In such cases, please refer to issue #1334, which tracks all documentation writing tasks. All unassigned child issues are available for anyone to work on!

You may come across instances where important or useful documentation is missing from this book. If you notice such gaps and would like to address them as a side project, we encourage you to do so!

-

If the issue is not already present, open a child issue on our documentation tracking issue #1334.

Make the title of this issue the name of the documentation you will be writing.

-

Link your child issue to a pr request.

File type and naming convention

All documentation for the Conjure Oxide book should be written in markdown. If you’re unfamiliar with markdown or need a refresher on its syntax, we recommend reviewing this guide.

When naming your documentation file, match the file name as closely as possible to the heading of the documentation and use underscores (‘_’) to replace spaces. For example, if your documentation page is titled “Creating Documentation,” name the file

creating_documentation.md.Where to place documentation

Place all documentation files in an appropriate location within the

/docs/srcdirectory. Each markdown file should be stored in the directory that matches its section in the documentation structure. For example, this file is located at/docs/src/developers_guide/contributors_guide/.If you know where your documentation belongs in the book, please place it in the appropriate directory. However, it’s not required to know the exact location—moving files is quick and easy. If you’re unsure where your documentation should go, place the file in

/docs/src/miscso it can be relocated later to a more suitable section.Viewing documentation on the book

You must ensure that the documentation you write formats as expected in the book. To do so, follow these steps:

- Open

/docs/src/SUMMARY.md. - If you have a definite location for your documentation, link the documentation in the appropriate section using the following format:

[ Section title ](path/to/file). - If you are unsure where your documentation should reside, place the documentation at any location, but remember to delete this link once you’re happy with your document’s formatting.

- In your terminal, ensure that you are in the

/docsdirectory and type in the following commandmdbooks serve --open

Important

Be sure to assign yourself and any other contributors working on the documentation to your child issue and pull request. This helps us keep track of who is responsible for each piece of documentation and ensures proper assignment.

- Open

-

Once you are happy with your documentation, request a review from one or more other members of your team.

Important

Place any contributors to the documentation and the date where the documentation was last updated in a comment on the first and second lines of the file. For instance:

[//]: # (Author: Jamie Melton)[//]: # (Last Updated: 09/12/2025)has been placed at the top of this file.

-

When your team is happy with this documentation, request a review from the current book editors.

The current book editors are: JamieASM and HKhan-5.

-

Assuming no adjustments are required, the documentation will then be merged in, and the child issue will be closed.

What is Vale? How does Conjure-Oxide ensure that our documentation is high quality and consistent?

Vale is a prose linter. Think of it like clippy, but for documentation style, spelling, terminology, and consistency.

At a high level, Vale works by:

- Reading a configuration file (

.vale.ini). - Loading one or more styles/rules.

- Scanning matching files (Markdown in our case).

- Emitting alerts (

suggestion,warning,error).

In this repository, Vale is primarily used for documentation quality checks under docs/.

How this repository configures Vale

Our project config lives in /.vale.ini and currently contains:

StylesPath = tools/vale_stylesVocab = conjure_vocab[*.md]+BasedOnStyles = Conjure

What this means:

- StylesPath tells Vale where our local style definitions and vocabulary live.

- Vocab enables the project vocabulary at:

tools/vale_styles/config/vocabularies/conjure_vocab/accept.txt

- BasedOnStyles = Conjure enables the rules in our

Conjurestyle for Markdown files.

For vocabulary behavior and format details, see Vale’s vocabulary docs: Vocabularies.

Fixing PR lint failures by updating the dictionary

When Vale flags a term that is valid for this codebase (domain-specific term, acronym, tool name, etc.), add it to:

tools/vale_styles/config/vocabularies/conjure_vocab/accept.txt

File format rules

accept.txt supports one regex entry per line.

- Lines beginning with

#are comments. - Entries are regex patterns.

- Case sensitivity matters unless you explicitly make a pattern case-insensitive.

Examples:

- Case-insensitive whole term:

(?i)API - Character-class style:

[Jj]son - Optional suffix:

Biplates?

Practical workflow for a failing PR

- Read the Vale error in the PR checks.

- Decide whether the word should be:

- corrected in the doc text, or

- accepted as project vocabulary.

- If it should be accepted, add a new entry to

accept.txt. - Keep the entry as narrow/specific as possible to avoid false positives.

- Commit and push; CI will re-run automatically.

When not to add something to accept.txt

Do not add entries that are just typos or inconsistent wording.

The vocabulary should represent intentional project terminology, not bypass style checks globally.

Good candidates:

- Domain terms (

SATInt,Uniplate,Savile). - Tool names (

rustc,Valgrind). - Project-specific identifiers (

conjure_essence_parser). - Variable names from the code that you might need to refer to.

Bad candidates:

- Accidental misspellings.

- One-off casing mistakes that should be corrected in source text.

Still unsure?

If you have any questions or concerns, please post them on the documentation discussion board. A book editor will respond to you promptly. Likewise, if you have suggestions for improving or streamlining this process, feel free to share your ideas with the book editors! Your feedback is always welcome.

“Omit needless words”

(c) William Strunk

Key Points

Resources

See:

Do’s

Our documentation is inline with the code. The reader is likely to skim it while scrolling through the file and trying to understand what it does. So, our doc strings should:

- Be as brief and clear as possible

- Ideally, fit comfortably on a standard monitor without obscuring the code

- Contain key information about the method / structure, such as:

- A single sentence explaining its purpose

- A brief overview of arguments / return types

- Example snippets for non-trivial methods

- Explain any details that are not obvious from the method signature, such as:

- Details of the method’s “contract” that can’t be easily encoded in the type system

(E.g: “The input must be a sorted slice of positive integers”; “The given expression will be modified in-place”)

- Non-trivial situations where the method may

panic!or cause unintended behaviour(E.g: “Panics if the connection terminates while the stream is being read”)

- Any

unsafethings a method does(E.g: “We type-cast the given pointer with

mem::transmute. This is safe because…”) - Special cases

- Details of the method’s “contract” that can’t be easily encoded in the type system

Things to Avoid

Documentation generally should not:

- Repeat itself

- Use long, vague, or overly complex sentences

- Re-state things that are obvious from the types / signature of the method

- Explain implementation details

- Explain high-level architectural decisions (Consider making a wiki page or opening an RFC issue instead!)

And finally… Please, don’t ask ChatGPT to document your code for you! I know that writing documentation can be tedious, but you can always:

- Write a one-sentence doc string for now and come back to it later

- Ask others if you don’t quite understand what a method does

Types and Tests are Documentation

Documentation is great, but we should also use the type system and other rust features to our advantage!

-

A lot of things (e.g: error conditions, thread safety, state) can be encoded in the types of arguments / return values. This is usually better than just

panic!-ing and adding a doc string to explain why. -

Tests are also a great way to illustrate the behaviour of your code and any special cases - and they also help with catching bugs!

Example Snippets

For non-trivial methods and user-facing API’s, it may be useful to include an example. Examples should be minimal but complete snippets of code that illustrate a method’s behaviour.

If you wrap your example in a code block:

```rust ... ```

Our CI will even run it and complain if the example does not compile / contains an error!

However, don’t feel obliged to include an example for every method! For simple methods they may not be necessary.

Examples

⚠️ Good but a bit wordy:

#![allow(unused)]

fn main() {

/// Checks if the OPTIMIZATIONS environment variable is set to "1".

///

/// # Returns

/// - true if the environment variable is set to "1".

/// - false if the environment variable is not set or set to any other value.

fn optimizations_enabled() -> bool {

match env::var("OPTIMIZATIONS") {

Ok(val) => val == "1",

Err(_) => false, // Assume optimizations are disabled if the environment variable is not set

}

}

}✅ Since everything else is obvious from the signature, we can just say:

#![allow(unused)]

fn main() {

/// Checks if the OPTIMIZATIONS environment variable is set to "1"

fn optimizations_enabled() -> bool { ... }

}⚠️ Not bad, but sounds a bit robotic

# Side-Effects

- When the model is rewritten, related data structures such as the symbol table (which tracks variable names and types)

or other top-level constraints may also be updated to reflect these changes. These updates are applied to the returned model,

ensuring that all related components stay consistent and aligned with the changes made during the rewrite.

- The function collects statistics about the rewriting process, including the number of rule applications

and the total runtime of the rewriter. These statistics are then stored in the model's context for

performance monitoring and analysis.

✅ Same idea but shorter

# Side-Effects

- Rules can apply side-effects to the model (e.g. adding new constraints or variables).

The original model is cloned and a modified copy is returned.

- Rule engine statistics (e.g. number of rule applications, run time) are collected and stored in the new model's context.

⚠️ A bit too detailed

# Parameters

- `expression`: A reference to the [`Expression`] that will be evaluated against the given rules. This is the main

target for rule transformations and is expected to remain unchanged during the function execution.

- `model`: A reference to the [`Model`] that provides context for rule evaluation, such as constraints and symbols.

Rules may depend on information in the model to determine if they can be applied.

- `rules`: A vector of references to [`Rule`]s that define the transformations to be applied to the expression.

Each rule is applied independently, and all applicable rules are collected.

- `stats`: A mutable reference to [`RewriterStats`] used to track statistics about rule application, such as

the number of attempts and successful applications.

✅ Just describing the meaning of arguments will do

(Details of the rewriting process belong on the wiki, and details of underlying types such as Model or Expression are already documented next to their implementations)

- `expression`: A reference to the [`Expression`] to evaluate.

- `model`: A reference to the [`Model`] for access to the symbol table and context.

- `rules`: A vector of references to [`Rule`]s to try.

- `stats`: A mutable reference to [`RewriterStats`] used to track the number of rule applications and other statistics.

CI/CD

Code Coverage

User Guide

-

Run coverage with

./tools/coverage.sh. -

Open this in a web-browser by opening

target/debug/coverage/index.html. -

An

lcovfile is generated attarget/debug/lcov.infofor in-editor coverage.- If you use VSCode, configuration for this should be provided when you clone the repo. Click the “watch” button in the status bar to view coverage for a file.

Implementation

See the code for full details: tools/coverage.sh.

A high level overview is:

- The project is built and tested using instrumentation based coverage.

- Grcov is used to aggregate these reports into

lcov.infoandhtmlformats. - The

lcov.infofile can be used with thelcovcommand to generate summaries and get coverage information. This is used to make the summaries in our PR coverage comments.

Reading:

- grcov README - how to generate coverage reports.

- rustc book - details on instrumentation based coverage.

Doc Coverage

Text: This prints a doc coverage table for all crates in the repo:

RUSTDOCFLAGS='-Z unstable-options --show-coverage' cargo +nightly doc --workspace --no-deps

JSON: Although we don’t use it yet, we can get doc coverage information as JSON. This will be useful for prettier and more useful output:

RUSTDOCFLAGS='-Z unstable-options --show-coverage --output-format json' cargo +nightly doc --workspace --no-deps

Reading

See the unstable options section of the rustdoc book. Link.

This section had been taken from the ‘Coverage’ page of the conjure-oxide wiki

Github Actions Cookbook

- This document lists common patterns and issues we’ve had in our Github actions, and practical solutions to them.

- The first point of reference should be the official documentation, but if that is ever unclear, here would be a good place to look!

Terminology used in this document:

- A workflow contains multiple independently ran tasks. Each task runs a series of steps. Steps can call predefined actions, or run shell commands.

I want to have a step output multilined / complex text

- name: Calculate PR doc coverage

id: prddoc

run: |

RUSTDOCFLAGS='-Z unstable-options --show-coverage' cargo +nightly doc --workspace --no-deps > coverage.md

echo 'coverage<<EOFABC' >> $GITHUB_OUTPUT

echo "$(cat coverage.md)" >> $GITHUB_OUTPUT

echo 'EOFABC' >> $GITHUB_OUTPUT```

The entire output of cargo doc can be substituted into later jobs by using ${{ steps.prdoc.outputs.coverage }}

workflow_run: I want a workflow that runs on a PR and can write to the repo

PR branches and their workflows typically live in on a branch on an external fork. Therefore, they cannot write to the repository. The solution is to split things into two workflows - one that runs on the PR with read-only permissions, and one that runs on main and can write to the repository. This is called a workflow_run workflow. Read the docs.

The workflow_run workflow should not run any user provided code as it has secrets in scope.

I want to access the calling PR in a workflow_run workflow

workflow_run jobs do not get access to the calling workflows detail. While one can access some things via the Github API such as head_sha, head_repo, this may not give any PR information. Github recommends saving the PR number to an artifact, and using this number to fetch the PR info through the API:

Example from this github blog post - see this for more explanation and details!

name: Receive PR

# read-only repo token

# no access to secrets

on:

pull_request:

jobs:

build:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v2

# imitation of a build process

- name: Build

run: /bin/bash ./build.sh

- name: Save PR number

run: |

mkdir -p ./pr

echo ${{ github.event.number }} > ./pr/NR

- uses: actions/upload-artifact@v2

with:

name: pr

path: pr/

name: Comment on the pull request

# read-write repo token

# access to secrets

on:

workflow_run:

workflows: ["Receive PR"]

types:

- completed

jobs:

upload:

runs-on: ubuntu-latest

if: >

github.event.workflow_run.event == 'pull_request' &&

github.event.workflow_run.conclusion == 'success'

steps:

- name: 'Download artifact'

uses: actions/github-script@v3.1.0

with:

script: |

var artifacts = await github.actions.listWorkflowRunArtifacts({

owner: context.repo.owner,

repo: context.repo.repo,

run_id: ${{github.event.workflow_run.id }},

});

var matchArtifact = artifacts.data.artifacts.filter((artifact) => {

return artifact.name == "pr"

})[0];

var download = await github.actions.downloadArtifact({

owner: context.repo.owner,

repo: context.repo.repo,

artifact_id: matchArtifact.id,

archive_format: 'zip',

});

var fs = require('fs');

fs.writeFileSync('${{github.workspace}}/pr.zip', Buffer.from(download.data));

- run: unzip pr.zip

- name: 'Comment on PR'

uses: actions/github-script@v3

with:

github-token: ${{ secrets.GITHUB_TOKEN }}

script: |

var fs = require('fs');

var issue_number = Number(fs.readFileSync('./NR'));

await github.issues.createComment({

owner: context.repo.owner,

repo: context.repo.repo,

issue_number: issue_number,

body: 'Everything is OK. Thank you for the PR!'

});

How do I get x commit inside a PR workflow? What do all the different github sha’s mean?

If you are running in a workflow_run workflow, you will need to get the calling PR first. See I want to access the calling PR in a workflow_run workflow instead.

The default github.sha is a temporary commit representing the state of the repo should the PR be merged now. You probably want github.event.pull_request.head.sha. Read The many SHAs of a github pull request.

This section had been taken from the ‘Github Actions’ page of the conjure-oxide wiki

Test GitHub Actions Locally With act

This guide shows how to run GitHub Actions workflows locally using act. It is useful for fast feedback on CI changes without waiting for a remote run.

For details on installation and advanced usage, see https://nektosact.com/.

Quick start

- Install

act(see the official site for the latest install steps). - From the repo root, run a workflow event, for example:

act --container-architecture linux/amd64

This repo works best with the catthehacker/ubuntu:act-latest image. It includes common CI tooling and matches GitHub-hosted Ubuntu runners more closely than the default image. The act default image can be configured at runtime; see https://nektosact.com/ for details.

Common usage patterns

- Run a specific workflow file:

act -W .github/workflows/ci.yml --container-architecture linux/amd64

- Run a specific job:

act -j test --container-architecture linux/amd64

- Provide secrets locally (example):

act --secret-file .secrets --container-architecture linux/amd64

- Run the Vale job for pull requests:

act pull_request --job vale --container-architecture linux/amd64

In this repo, the vale job is part of the pull request workflows, so this command runs that job locally against a simulated pull_request event. It uses the recommended catthehacker/ubuntu:act-latest image by default, and you can configure the image at runtime; see https://nektosact.com/usage/runners.html#alternative-runner-images/ for details.

Limitations to keep in mind

- Workflow triggers: some events (

workflow_run,pull_request_target, scheduled jobs) do not behave exactly like GitHub and may need extra inputs or manual flags. - GitHub API usage: steps that call the GitHub API (releases, issues, PR comments, status checks) require valid tokens and may still diverge from real GitHub behavior.

- Deployment workflows: jobs that deploy (cloud credentials, OIDC, environment protection rules) are hard to reproduce locally and are better validated in a real GitHub run.

- Hosted services and caches: actions depending on GitHub-hosted caches or services may be no-ops or behave differently.

- Matrix and concurrency: complex matrices, concurrency groups, and reusable workflows can be partially supported but may require extra configuration or run differently.

When in doubt, use act for fast iteration, then confirm changes with a real GitHub Actions run.

Flatten

Usage in Essence

With one argument, flatten(M) returns a one-dimensional matrix containing all the elements of the input matrix.

With two arguments, flatten(n,M) the first n+1 dimensions are flattened into one dimension.

Warning

flatten(n,M)has not yet been implemented.

Expression variant

The Flatten variant of Expression in the AST has structure defined as:

Flatten(Metadata, Option<Moo<Expression>>, Moo<Expression>)

The return type and domain of Flatten is a matrix of the innermost elements of the flattened matrix’s return type or domain. This has not yet been implemented in the case where the dimensions to flatten are provided.

flatten rule

The flatten rule in crates/conjure-cp-rules/src/matrix/flatten.rs turns flatten expressions containing atomic matrix expressions into a flat matrix literal.

Coding Resources and Conventions

Why benchmark?

Benchmarking is an essential part of any coding project, especially when it is performance-oriented. While it can be a little daunting when first getting started, this guide aims to show that benchmarking can be integrated into conjure-oxide and its workflows with a little work.

criterion

By far, the most popular benchmarking tool currently available for Rust is the criterion crate. Based off the Haskell library of the same name, it is a statistics-based tool which aims to measure wall-clock time for individual functions. To get started on criterion benching in a rust project my_project, you first need to make a directory called benches, which Rust will recognise as holding all benchmarking files. Let’s now make a benchmark called my_bench.rs inside of my_project/benches

We now add the following changes to the crate’s cargo.toml file

#![allow(unused)]

fn main() {

[dev-dependencies]

criterion = "0.3"

[[bench]]

name = "my_bench"

harness = false

}Suppose that we now want to create function to benchmark the addition of two numbers (which, as expected should be very fast!). We add the following to my_bench.

#![allow(unused)]

fn main() {

use criterion::{Criterion, black_box, criterion_group, criterion_main};

pub fn add(x: u64, y: u64) -> u64 {

x + y

}

pub fn criterion_benchmark(c: &mut Criterion) {

c.bench_function("add 20 + 20", |b| {

b.iter(|| add(black_box(20), black_box(20)))

});

}

criterion_group!(benches, criterion_benchmark);

criterion_main!(benches);

}The .bench_function method creates an instance of a benchmark. Then the .iter method tells Criterion to repeatedly execute the provided closure. Finally, black_box is used to prevent the compiler from optimising away the code being benchmarked. To run the benchmark simply run cargo bench. Among other things, your terminal should show something alone the lines of:

Which shows the average wall-clock time, as well as providing some information on outliers and performance against previous benchmarks. For the full details, see

\target\criterion. The .html reports are especially good.

criterion is usually the right tool for most benchmarks, although there are issues. Due to the statistics-driven ethos of criterion, there is currently no one-shot support, with 10 samples being the minimum number of samples for a benchmark. Wall-clock time also gives little insight into where things are slowing in your code, and will not catch things like poor memory locality. Even more crucially, however, is how criterion performs in CI pipelines. The developer’s themselves say the following:

”You probably shouldn’t (or, if you do, don’t rely on the results). The virtualization used by Cloud-CI providers like Travis-CI and Github Actions introduces a great deal of noise into the benchmarking process, and Criterion.rs’ statistical analysis can only do so much to mitigate that. This can result in the appearance of large changes in the measured performance even if the actual performance of the code is not changing.“

As such, we need some other metric apart from wall-clock time to use in order to still run benchmarks in a CI pipeline. This is where the iai-callgrind crate comes in.

iai-callgrind

Iai-Callgrind is a benchmarking framework which uses Valgrind’s Callgrind and other to provide extremely accurate and consistent measurements of Rust code. It does not provide information on wall-clock time, instead focussing on metrics like instruction count and memory hit rates. It is important to note that this will only run on linux, due to the valgrind dependency. Let us create a benchmark called iai-bench in the benches folder. We add the following to cargo.toml

#![allow(unused)]

fn main() {

[profile.bench]

debug = true

[dev-dependencies]

iai-callgrind = "0.14.0"

criterion = "0.3"

[[bench]]

name = "iai-bench"

harness = false

}To get the benchmarking runner, we can quickly compile from source with cargo install --version 0.14.0 iai-callgrind-runner. To benchmark add using iai-callgrind we add the following to benches/iai-bench.rs.

#![allow(unused)]

fn main() {

use iai_callgrind::{main, library_benchmark_group, library_benchmark};

use std::hint::black_box;

fn add(x: u64, y:u64) -> u64 {

x+y

}

#[library_benchmark]

#[bench::name(20,20)]

fn bench_add(x: u64,y:u64) -> u64 {

black_box(add(x,y))

}

library_benchmark_group!(

name = bench_fibonacci_group;

benchmarks = bench_add

);

main!(library_benchmark_groups = bench_fibonacci_group);

}And again run using cargo bench. To specify only running this benchmark we can instead do cargo bench --bench iai-bench. Upon running, you should see something like the following.

As you can see,

iai is lightweight, fast and can provide some really accurate statistics on instruction count and memory hits. This makes iai perfect for benching in CI workflows!

Workflows

Once benchmarking is established, workflows are not too difficult to add too. As discussed before, for CI workflows iai should be used, and not criterion. Take the following example from the tree-morph crate. I will put the code below and then briefly explain each portion. It should not be too difficult to adapt to other benchmarks.

name: "iai tree-morph Benchmarks"

on:

push:

branches:

- main

- auto-bench

paths:

- conjure_oxide/**

- solvers/**

- crates/**

- Cargo.*

- conjure_oxide/tests/**

- .github/workflows/iai-tree-morph-benches.yml

pull_request:

paths:

- conjure_oxide/**

- solvers/**

- crates/**

- Cargo.*

- conjure_oxide/tests/**

- .github/workflows/iai-tree-morph-benches.yml

workflow_dispatch:

jobs:

benches:

name: "Run iai tree-morph benchmarks"

runs-on: ubuntu-latest

timeout-minutes: 10

strategy:

# run all combinations of the matrix even if one combination fails.

fail-fast: false

matrix:

rust_release:

- stable

- nightly

steps:

- uses: actions/checkout@v4

- uses: dtolnay/rust-toolchain@stable

with:

toolchain: ${{ matrix.rust_release }}

- name: "Cache Rust dependencies"

uses: actions/cache@v4

with:

path: |

~/.cargo/registry

~/.cargo/git

target

key: ${{ runner.os }}-cargo-${{ matrix.rust_release }}-${{ hashFiles('**/Cargo.lock') }}

restore-keys: |

${{ runner.os }}-cargo-${{ matrix.rust_release }}-

- name: Install Valgrind

run: sudo apt-get update && sudo apt-get install -y valgrind

- name: Install iai-callgrind-runner

run: cargo install --version 0.14.0 iai-callgrind-runner

- name: Run tree-morph benchmarks with iai-callgrind

run: cargo bench --manifest-path crates/tree_morph/Cargo.toml --bench iai-factorial --bench iai-identity --bench iai-modify_leafs > iai_callgrind_output.txt

- name: Upload artefact

uses: actions/upload-artifact@v4

with:

name: iai-callgrind-results-${{ matrix.rust_release }}

path: iai_callgrind_output.txt

Some comments:

namejust tells GitHub what to call the workflowsontells GitHub when to run the workflowjobsis the core of the workflow:strategyspecifies that we want to run both nightly and stable rust- In

steps, we first check out the repository code and set up a specific stable Rust toolchain based on a matrix variable, and then cache Rust dependencies. Next we install the necessary things for valgrind to run, before running benchmarks. We tell the virtual machine to an.txtfile and upload it as an artefact.

Crate Structure

Overview

We follow a “monorepo” approach; That is, a number of related modules are developed in a single repository. This makes it easier to integrate them and test our entire project all at once.

Our repository can be broken down into four key components:

- The user-facing

conjure-oxidecommand line tool and library, and its integration tests. This is stored inconjure-oxide/conjure_oxide. - The AST type definitions, rewrite engine, and related implementation code.

These are broken up into separate crates and stored in

conjure-oxide/crates/. - Rust bindings for solvers; That is, wrapper code and build files that enable us to:

-

Compile solvers (which are developed outside of this project and written in other languages, such as C++) together with our project

-

“Hook into” solver methods and call them from inside our Rust code

These are stored in

conjure-oxide/solvers/on a per-solver basis.

-

- Various other scripts and tools that we use to build, document and test our project.

These are stored in

conjure-oxide/tools/.

CLI Tool

The command-line conjure-oxide tool is the final product we ship to users.

All logic related to the CLI, parsing user input, and displaying solutions is implemented in conjure-oxide/conjure_oxide/src.

This module (called a “crate” in Rust) also re-exports some type definitions and functions from conjure_core and other crates.

This is our public, user-facing API that could eventually be used by other people in their projects. At the moment, our project is under development and there is no stable API specification.

There are some examples/ illustrating how various parts of the project are used.

Finally, for historical reasons, this folder contains some utility code that may be refactored or moved elsewhere in the future.

Tests

Our suite of integration tests runs automatically on every commit to the main repository.

It tests conjure-oxide end-to-end: parsing an example Essence file, running the rewriter, calling the solver, and validating the solution.

The test files are stored in conjure_oxide/tests/integration/, sorted into sub-directories.

Code to run our project on these files is contained in integration_tests.rs.

Crates

Most of our implementation is contained in the crates/ directory. Here is an overview of what each crate does:

conjure_corecontains:- Our rule engine implementation (

conjure_core/src/rule_engine/) - The definition of our Abstract Syntax Tree (AST) for Essence; That is, the Rust representation of an Essence program (

conjure_core/src/ast) - The generic

SolverAdaptorinterface for interacting with solvers (conjure_core/src/solver) - Concrete implementations of

SolverAdaptorfor each solver we support, such as Minion or RustSAT (conjure_core/src/solver/adaptors) - Other miscellaneous types and utilities

- Our rule engine implementation (

conjure_rulesdefines rules and rule sets for our rewrite engine to use. Rules are grouped into files and directories based on their purpose: for example, all rules for normalising boolean expressions are inconjure_rules/src/normalisers/bool.rsconjure_rule_macrosimplements the#[register_rule(...)]and#[register_rule_set(...)]procedural macros. See also: Wiki - Rules and RuleSets.conjure_essence_parserimplements the native Rust parser for Essence. It uses our Tree-sitter grammar, which is defined separately intree-sitter-essenceconjure_essence_macrosimplements theessence_expr!procedural macro.enum_compatability_macrois a macro that allows us to indicate whether certain features of Essence are compatible with certain solvers, for documentation purposes.randicheckis a somewhat separate project developed by Ty (@TAswan) and others. It aims to use Conjure to automatically validate Haskell code and generate minimal failing tests. (TODO is this accurate?)tree_morphis a generic library for tree transformations. In the future, it will replace our current rule engine implementation.

Also:

- The

uniplatecrate, which we use to traverse the AST, used to be part of this repository. It is now maintained separately at https://github.com/conjure-cp/uniplate.

Dependencies

The dependencies between crates are shown bellow.

An arrow A -> B means that A imports from B. The diagram is made using Graphviz, and its source code is located TODO.

Functional Rust

Functional Rust

Consider the following oft-quoted statement about Rust:

Rust is blazingly fast and memory-efficient: with no runtime or garbage collector. Rust’s rich type system and ownership model guarantee memory-safety and thread-safety — enabling you to eliminate many classes of bugs at compile-time.

Taken specifically from the Rust Foundation’s1 page talking about why Rust is a good language to use.

But let us focus on the implications of this statement on Conjure Oxide specifically. The key details that one needs to know here are that, despite arguably being imperative, Rust adopts many functional programming concepts in its design2. This is significant because the Conjure Oxide codebase makes extensive use of these functional programming concepts.

Making use of them allows the codebase to leverage Rust’s type system to eliminate error cases through coding style, making the code more robust. It also makes the code easier to write, because Rust’s safety system enforces function return types.

Result

By using the Result type, functions can explicitly state success or failure. This also allows functions to call each other linearly in a safe manner3.

This enables the code to be written so that functions can do essentially whatever they need to do as side effects, as long as they record a success or failure result. The error type allows error propagation in a more sophisticated way than exit codes, while still being more efficient than exceptions. This structure is used in a lot of places in this codebase, because it allows for functions to be treated uniformly most anywhere4, meaning they can be called anywhere by any originator (or caller) function without having to resort to unsafe rust and sticking to the type safety scheme.

This also allows for the use of the ? operator5, which means that errors can be propagated up the call stack lazily.

Consider the following example, written imperatively in Java-like pseudocode:

public void fnReturnsError(a,b) {

...Some Code...

// might throw error1

int foo1 = maybeReturnsMyError1(a);

...Some More Code...

// might throw error2

int foo2 = maybeReturnsMyError2(b);

...Wow, when will this code end...

// might throw error3

int foo3 = maybeReturnsMyError3(a,b);

}

Alternatively, consider a lower-level language like C, which is far more comparable to Rust in its uses and applications, where there are no ‘error types’ and errors behave like either enums or just integral exit codes.

In a C-like language, a similar example would look like this:

int returnAnExitCode(int a, int b) {

int exit_code = 0;

...Some Code Here Too...

int exit_code = maybeReturnsMyExitCode1(a);

...Some More Code...

int exit_code = maybeReturnsMyExitCode2(b);

...Why, if it isn't yet more code ...

int exit_code = maybeReturnsMyExitCode3(a,b);

return exit_code

}

This means that, like rust, an error is not an exception getting ‘thrown’, but a value that is being returned. However, unlike rust, they require these exit codes to be set and returned at the end.

This also means that the function calls must be done on some top-level data structure, and that global structure needs to be accessed at the end rather than being able to simply access the top-level data structure through the returned value.

In this type of application, Rust offers two advantages:

- The

Returntype, even though it records a success/failure, can be pattern-matched using thematchconstruct to access the data structure which the functions are performing side effects on. - The aforementioned

?operator can be used to force the code to return an error type as soon as it is ‘raised’ – that is, returned by one of the functions in the call stack.

This example would look like this in rust-like functional code:

#![allow(unused)]

fn main() {

fn returns_a_result(a: i32, b: i32) -> Result<i32, MyError> {

// Some code here...

// The ? operator propagates errors immediately

let foo1 = maybe_returns_my_error1(a)?;

// Some more code...

let foo2 = maybe_returns_my_error2(b)?;

// Even more code...

let foo3 = maybe_returns_my_error3(a, b)?;

// If we get here, all operations succeeded

Ok()

}

}Side Effects

Throughout the above section, references are made to changing things through ‘function side effects’. Let us dive deeper into what this actually means.

Functions are fairly complex, but here is what Alonzo Church has to say about what they do, taken from his paper The Calculi of Lambda Conversion:

A function is a rule of correspondence by which when anything is given (as argument) another thing (the value of the function for that argument) may be obtained. That is, a function is an operation which may be applied on one thing (the argument) to yield another thing (the value of the function).

In quite an abstract sense, this passage establishes that a Function is simply a mapping from a set of inputs to a set of outputs.

Now, knowing this, the term side effect also begins to make sense – any persistent effects of a function which are not in the returned value are side effects. In a general sense, this is things like writing to files, printing and so on. More specifically in conjure oxide, almost all processing is done by way of side effects. While this makes sense even in an imperative context, imperative code can still have some functions chained together in ways that are only possible if the data structure being affected by them is actually returned by them. In rust, specifically when this side-effect-only style of programming is used, programs end up looking quite a bit more concise and readable.

Now, having all of this knowledge in the back of your head, you will understand why the following things must be kept in mind:

- Make the greatest effort to treat Rust as a functional language when programming in this (and indeed any) codebase. Not only does this lead to cleaner, more concise and (arguably) more readable code, it actually helps avoid errors and edge cases.

- Learn to leverage the Rust type and safety system instead of wrestling with by writing code that uses features in the language like

Result<T,E>andOption<T>. This may involve learning where to use these instead of doing things that one cannot do in imperative languages like C. - Rust code is only properly ‘safe’ if it uses the type system properly, meaning it is a good idea to avoid things like returning null, unwrapping

Resultinstances6.

-

Who are, of course, an entirely unbiased source ↩

-

Rust’s original implementation language was OCaml, which is functional ↩

-

Similar to calling functions in dynamically typed languages like Python, but with enforced types ↩

-

This is because, at their core, all functions are essentially of the type (..) -> ReturnType. Making the return type standard allows for all functions to be of similar types. ↩

-

Which immediately propagates the error up through the call stack in rust. ↩

-

This is what caused the infamous cloudflare outage. ↩

Overview

The parser converts incoming Essence programs to Conjure Oxide to the Model Object that the rule engine takes in. The relevant parts of the Model object are the SymbolTable and Expression objects. The symbol table is essentially a list of the variables and their corresponding domains. The Expression object is a recursive object that hold all the constraints of the problem, nested into one object. The parser has two main parts. The first the tree-sitter-essence crate, which is a general Essence parser using the library tree-sitter. The second part is the conjure_essence_parser crate which is Rust code that uses the grammar to parse Essence programs and convert them into the above-mentioned Model object.

Tree Sitter Grammar

Tree-sitter is a parsing library that creates concrete syntax trees from programs in various languages. It contains many languages already, but Essence is unfortunately not one of them. Therefore, this crate contains a JavaScript grammar for Essence, which tree-sitter uses to create a parser. The parser is not specific to Conjure Oxide as the grammar merely describes the general Essence language, but it is used in Conjure Oxide and currently covers only parts of the Essence language that Conjure Oxide deals with and has tests written for. Therefore, it is not extensive and relatively simple in structure.

General Structure

At the top level, there can be either find statements, letting statements, and constraint statements. Find statements consist of the keyword find, one or more variables, and then a domain of either type boolean or integer. Letting statements have the keyword letting, one or more variables, and an expression or domain to assign to those variables. Constraints contain the keyword such that and one or more logical or numerical expressions which include variables and constants.

Expression Hierarchy